Soft 3D Reconstruction for View Synthesis

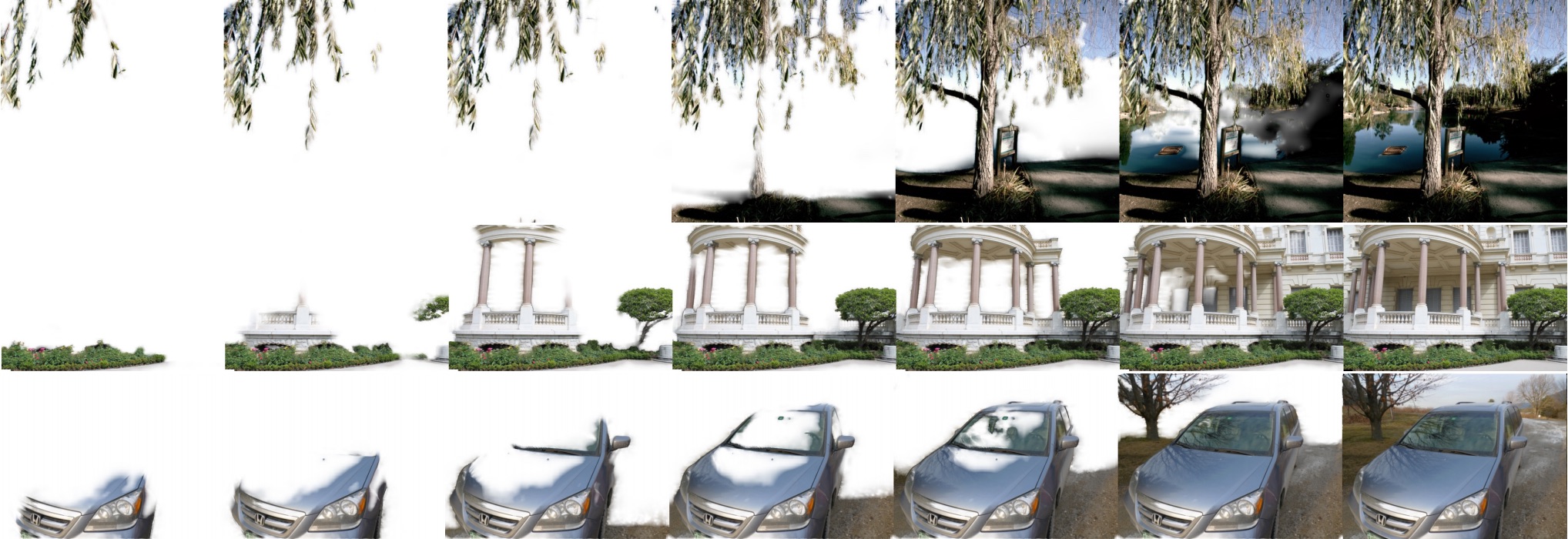

Progress of rendering virtual views of difficult scenes containing foliage, wide baseline occlusions and reflections. View ray and texture mapping ray visibility is modelled softly according to a distribution of depth probabilities retained from the reconstruction stage. This results in soft edges, soft occlusion removal, partial uncertainty of depth in textureless areas, and soft transitions between the dominant depths in reflections.

Abstract

We present a novel algorithm for view synthesis that utilizes a soft 3D reconstruction to improve quality, continuity and robustness. Our main contribution is the formulation of a soft 3D representation that preserves depth uncertainty through each stage of 3D reconstruction and rendering. We show that this representation is beneficial throughout the view synthesis pipeline. During view synthesis, it provides a soft model of scene geometry that provides continuity across synthesized views and robustness to depth uncertainty. During 3D reconstruction, the same robust estimates of scene visibility can be applied iteratively to improve depth estimation around object edges. Our algorithm is based entirely on O(1) filters, making it conducive to acceleration and it works with structured or unstructured sets of input views. We compare with recent classical and learning-based algorithms on plenoptic lightfields, wide baseline captures, and lightfield videos produced from camera arrays.

BibTeX

@article{Soft3DReconstruction,

author = {Eric Penner and Li Zhang},

title = {Soft 3D Reconstruction for View Synthesis},

booktitle = {ACM Transactions on Graphics (Proc. SIGGRAPH Asia)},

publisher = {ACM},

volume = {36},

number = {6},

year = {2017}

}

Acknowledgements

We thank our colleges and collaborators at Google and all authors of prior work for providing their results on several datasets. In particular we would like to thank Marc Comino, Daniel Oliveira, Yongbin Sun, Brian Budge, Henri Astre, Vadim Cugunovs, Jiening Zhan and Kay Zhu for contributing and reviewing code; Matt Pharr and his team for supplying imagery; David Lowe, Matthew Brown, Aseem Agarwala, Matt Pharr and Siggraph Asia reviewers for their feedback on the paper.